
When Analytics India Magazine spoke to Yann LeCun, Meta AI’s chief and the guru of self-supervised learning, about data scarcity being the major bottleneck to scaling large models, he emphasised that the issue is not data scarcity so much as data optimisation. He said, “We have more data than we can use, but we don’t know how to use it.”

According to him, “The dataset sizes for text models have already peaked, simply because the S2N [signal-to-noise] ratio will start declining.” As the researchers of the data2vec 2.0 paper also say, “While the resulting models are excellent few-shot learners, the preceding self-supervised learning stage is far from efficient: for some modalities, models with hundreds of billions of parameters are trained, which often pushes the boundaries of what is computationally feasible”.

But, according to some researchers, we are approaching a shortage of good-quality data, especially considering that most of the text on the internet is duplicated. Along similar lines, referring to the scale at which GPT-generated content is growing on the internet, Google’s François Chollet also said that the performance of generative models (which are based on LLMs) will start to degrade as they begin training on their own output. This proved to be a huge challenge when using self-supervised models to scale LLMs, since the issue was not only having that amount of data but also needing good-quality data to ensure high-quality output.

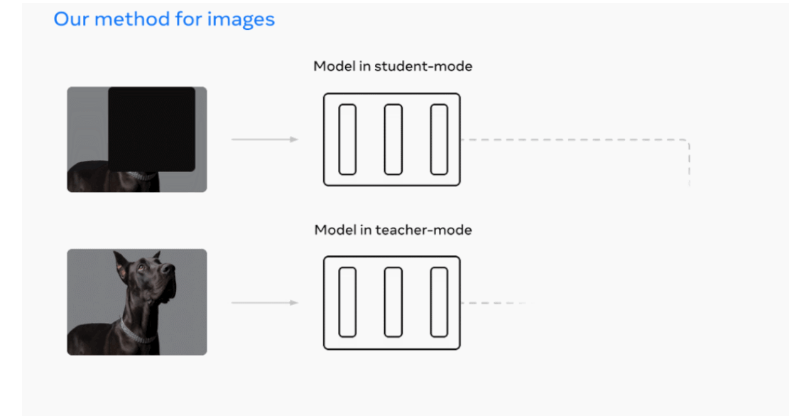

In this regard, while self-supervised learning achieved high-quality output without needing labelled data, the amount of training data required for a single input modality (text or image) was enormous. The current scaling laws by DeepMind state that, for a given compute budget, optimal performance is achieved by scaling the model size (parameters) and the number of training tokens (dataset size) equally.

Today, Meta AI announced the second version of data2vec, which addresses some of the challenges faced by self-supervised learning. Data2vec 2.0 is a self-supervised algorithm that works across three different modalities: speech, vision, and text. This high-performance, single-purpose algorithm addresses several limitations of self-supervised learning (SSL) models, which are trained on a single input modality, such as images or text, and require a lot of computational power. The new and improved version can train self-supervised learning models up to 16x faster for computer vision while maintaining similar accuracy to the most popular existing algorithm. Earlier this week, AIM released a story, ‘The Missing Link of Self-Supervised Learning’.
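To make the compute-optimal scaling rule mentioned above concrete, here is a minimal sketch of how a fixed compute budget might be split between parameters and training tokens. It rests on two assumptions that are not stated in this article: the common approximation that training compute is about 6 × parameters × tokens (in FLOPs), and a rule-of-thumb ratio of roughly 20 training tokens per parameter. The function name and the constants are illustrative only.

```python
import math

# Assumptions (not from the article):
#   training compute C ~= 6 * N * D FLOPs (N = parameters, D = training tokens)
#   compute-optimal ratio of roughly 20 training tokens per parameter
FLOPS_PER_PARAM_TOKEN = 6.0
TOKENS_PER_PARAM = 20.0


def compute_optimal_allocation(compute_flops):
    """Return (parameters, tokens) that spend compute_flops under the assumptions above."""
    if compute_flops <= 0:
        raise ValueError("compute_flops must be positive")
    # C = 6 * N * D and D = 20 * N  =>  C = 120 * N**2  =>  N = sqrt(C / 120)
    params = math.sqrt(compute_flops / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    tokens = TOKENS_PER_PARAM * params
    return params, tokens


if __name__ == "__main__":
    # Each budget is 4x the previous one.
    for budget in (1e21, 4e21, 16e21):
        n, d = compute_optimal_allocation(budget)
        print(f"C={budget:.1e} FLOPs -> N={n:.2e} params, D={d:.2e} tokens")
```

Under these assumptions, both quantities grow with the square root of compute, so quadrupling the budget doubles the parameter count and the token count together. That is the sense in which model size and dataset size are scaled equally.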
